If luck, as Louis Pasteur noted, favors the prepared mind, how will oncologists not only prepare but extend cognitive capacity in an era where both medical knowledge and the scope of human endeavor that physicians must address continue to increase exponentially? One approach is to go narrow and deep, focusing our education and work in a subspecialized area of oncology. Once a model found only in academic centers, the complexity of medical decision-making and the Big Bang expansion of science resulting from precision medicine has influenced the next generation of oncologists to seek subspecialization. Greater specialization allows one to gain confidence in the mastery of the relevant knowledge base, to gain comfort through a set of clinical decision-making solutions germane to a specific population of patients with cancer, and to provide focus for one’s continuing education and clinical trial participation. However, when something is gained, invariably something is lost, as Heisenberg’s Uncertainty Principle mathematically articulated. A broader clinical practice supports intellectual stimulation through cross-fertilization of ideas as advances in clinical oncology tend to be uneven, with some fields in stasis waiting for the next big thing to develop while other fields experience a burst of discovery and innovation. At Hartford HealthCare Cancer Institute, we have over 40 medical oncologists on faculty, about evenly distributed between those who are subspecialized and those who describe themselves as generalists. It’s important to understand that subspecialization is not limited by anatomy; we have subspecialists in geriatric oncology, disparities, survivorship, palliative care, and quality improvement. The underlying science of neoplasia and personalized, patient-centric care of patients with cancer are both fundamentally general in the sense of applicability and relevance to all types of cancer.

Preparing the mind also means opening the mind up to new perspectives by putting aside ingrained assumptions of the world, which too often are embedded with biases. It may be healthy on occasion to momentarily step outside of one’s field, expand our intellectual horizon, and look up at the stars. To spend some intellectual capital in another world, suspend our conventional beliefs, and heed the advice Hamlet imparted to Horatio: “And therefore as a stranger give it welcome. There are more things in heaven and earth, Horatio, than are dreamt of in your philosophy.”

On the recommendation of a pathology colleague, I recently read Brian Cox and Jeff Forshaw’s The Quantum Universe (And Why Anything That Can Happen, Does), an introduction for the scientifically inclined non-physicist to the highly non-intuitive implications of quantum physics. The opening chapters lead us through the transition confronting physicists of the early 20th century as they were intellectually challenged to work through new concepts and models demanded by the precision of mathematics and sub-molecular and subatomic observations of the world. Quantum physics predicts that our sun will become a white dwarf star in 5 billion years, a convenient statement since none of us will be around to confirm that. But it also provides the theory that led to the invention of the solid state transistor and the silicon chip that have led to advances in artificial intelligence that now promise to extend the limits of human cognition. For 300 years, we had understood the universe through the constructs of Newtonian physics, governed by Isaac Newton’s three laws, but those laws would not have predicted these advances and by necessity required a radically new view. (I digress to mention that a 300-year-old apple tree on Newton’s family estate thought to be “the tree” continues to bear fruit today, and that George Sledge taught me Newton’s Fourth Law: One cookie, one fig.) What does endure is the scientific method of discovery that Newton’s work defined for us.

The scientific method is a virtuous cycle starting with an observation which leads to a theory to expand our understanding of the observed fact and that could be proven false if a predicted outcome is not observed (the null hypothesis). Since we desire that our mind be ever prepared for new knowledge, the theory can never achieve absolute certainty; only the probability that the model is valid increases if the null hypothesis is rejected. The process by which an observation generates a theory or model is inductive reasoning. Designing an experiment to prove the null hypothesis is deductive reasoning and together inductive and deductive reasoning form the virtuous cycle of the scientific method.

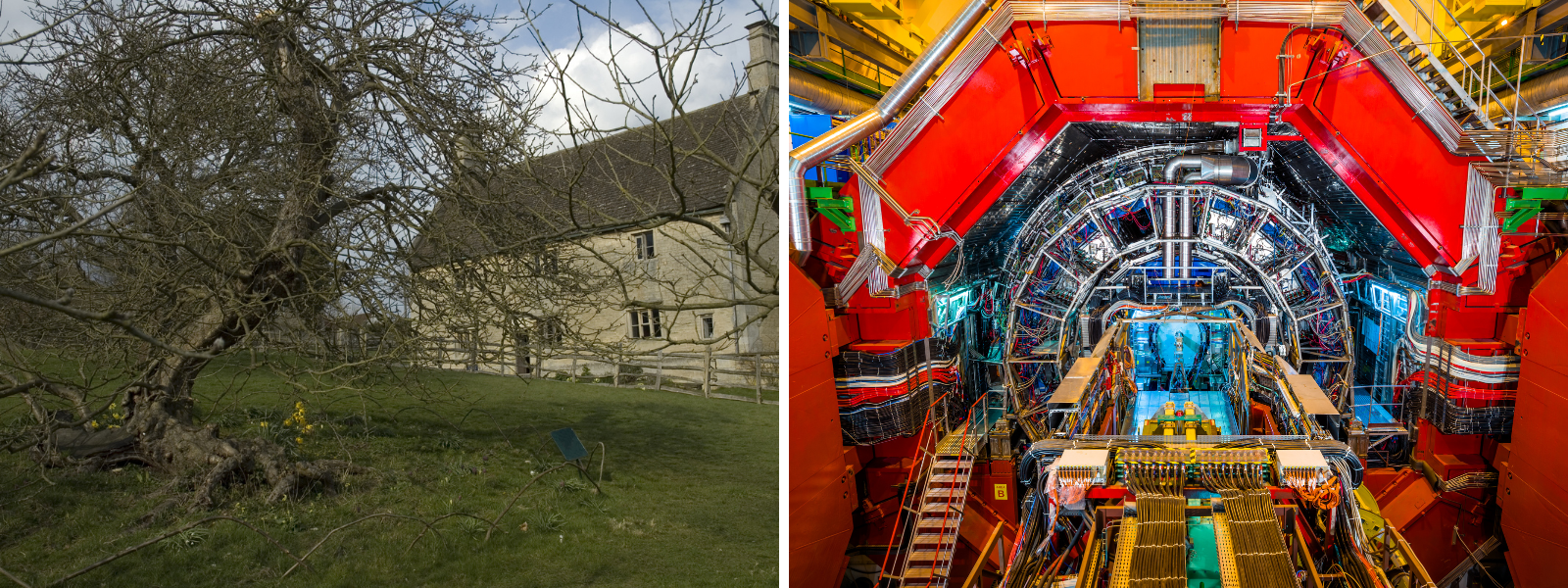

In medicine, we talk of observational or real-world data as data derived from routine health care operations and experimental data as data arising from controlled experiments performed through prospective clinical studies, a distinction that is not made as strongly, or emotionally, in physics. The movement of celestial bodies is not valued less because it occurs without our intervention. Observational data is a component of both inductive and deductive reasoning and the source of the data, whether it be planetary movements or from the CERN particle accelerator, does not determine that data’s validity. What is critical is the precision of the measurements and understanding the degrees of uncertainty in the data. In medicine, observational data is frequently limited due to lack of precision in data capture, the fitness of the data for the question being asked, incomplete or missing data, and other data issues that introduce a high degree of uncertainty. So how will we in medicine move from the apple tree on Newton’s estate (shown on the left) to the CERN particle accelerator (shown on the right) and transfer the lessons learned by physics in the 20th century to medicine in the 21st?

To explore this question, JCO Clinical Cancer Informatics has assembled a collection of 17 articles that highlight important advances in medical informatics which serve to improve the utility of observational medical data. The Cancer Data Science and Computational Medicine Special Series review articles were selected to illustrate five aspects of cancer data science and computational medicine: to improve the semantic veracity of data derived from clinical activities, extend the ability to exchange data among users, develop the analytic tools to study the data, better reflect cancer biology, and unleash clinical utility. A sampling of these articles follows.

Cancer Data Models and Data Transmission

Data models help us organize observations into a coherent structure that facilitates data retrieval while simultaneously revealing inherent relationships that exist between data elements. Data models help us understand our world because they require us to think about our data with greater precision and cohesiveness. Cancer data models that incorporate both clinical and genomic information are especially useful in this regard, as are advances in data transmission standards as discussed in these articles:

- Improving Cancer Data Interoperability: The Promise of mCODETM (Minimal Common Oncology Data Elements) Initiative

- Extending the OMOP Common Data Model and Standardized Vocabularies to Support Observational Cancer Research

- OncoTree: A Cancer Classification System for Precision Oncology

- Chemotherapy Knowledge Base Management in the Era of Precision Oncology

- Structured Data Capture for Oncology

Data Governance and Sharing

Cancer data is big data, but constructing data sets with the necessary scale requires data sharing, not only to reach the volume needed for machine learning, but to overcome the risk of biased data sets, or data sets that only reflect a single institution’s unique experience and not the totality of the universe. The standard for observational cancer data has been the U.S. federal National Cancer Institute (NCI) Surveillance, Epidemiology, and End Results Program (SEER) and Centers for Disease Control and Prevention (CDC) National Program of Cancer Registries. The CDC program relies on data collected by each state through their Central Cancer Registry, with each state-based registry functioning as an independent program. Cancer data modernization efforts are now underway at both the CDC and state levels with goals to improve the timeliness of data collection and the bi-directionality of data exchange. Since cancer control and improving patient access to high-quality cancer services are largely state-based through health care delivery sites of care, cancer data needs to be shared with the medical community responsible for patients with cancer. Besides governmental data-sharing systems, medical researchers have formed collaborative groups to share clinical and genomic data. The National Institutes of Health (NIH) has developed a Data Commons informatics platform to support these efforts. Data sharing is discussed in the following articles:

- Pursuing Data Modernization in Cancer Surveillance by Developing a Cloud-Based Computing Platform: Real-Time Cancer Case Collection

- Population Health Informatics Can Advance Interoperability: National Program of Cancer Registries Electronic Pathology (ePath) Reporting Project

- The Next Generation of Central Cancer Registries

- Tailoring Therapy for Children With Neuroblastoma Based on Risk Group Classification: Past, Present, and Future

- The BloodPac Data Commons for Liquid Biopsy Data

Machine Learning and Data Analytics

Cancer data is not only big in terms of volume but also in the diversity of data types: clinical, pathologic, genomic, and socio-economic. Multidimensional data at scale poses computational challenges and the risk of bias from overfitting the data. Data science and computational medicine provide the means with which to discover insights from our data and, through predictive analytics, improve patient outcomes. Two articles review the general field of machine learning and an application of neural networks to generalize learning from one data set to another:

- Machine Learning in Oncology: Methods, Applications, and Challenges

- Unsupervised Resolution of Histomorphologic Heterogeneity in Renal Cell Carcinoma Using a Brain Tumor-Educated Neural Network

Cancer Biology

The American Joint Committee on Cancer (AJCC) cancer staging system, now in its eighth edition, is the most widely implemented cancer classification system in common daily use by clinicians and cancer control professionals. Based upon anatomic measurements, it does not yet reflect advances in genomics, immune-oncology, tumor microenvironment, and human microbiome. However, machine learning applied to image recognition is being actively explored to reveal biologic properties of cancer and to predict cancer behavior as illustrated in:

Clinical Utility

Supporting patient care and medical decision-making can be augmented through capturing and mapping data to data classification systems and providing point of care clinical decision support:

- Computer-Assisted Reporting and Decision Support in Standardized Radiology Reporting for Cancer Imaging

- Clinical Utility and User Perceptions of a Digital System for Electronic Patient-Reported Symptom Monitoring During Routine Cancer Care: Findings From the PRO-TECT Trial

Big data and AI have devolved into fashionable buzzwords used promiscuously and without precision—but they are also precision tools at our disposal. Just as the CERN particle accelerator is a multinational collaborative effort, so the speed with which cancer data science advances will be dependent on the robustness of the cancer data ecosystems we design.

Recent posts